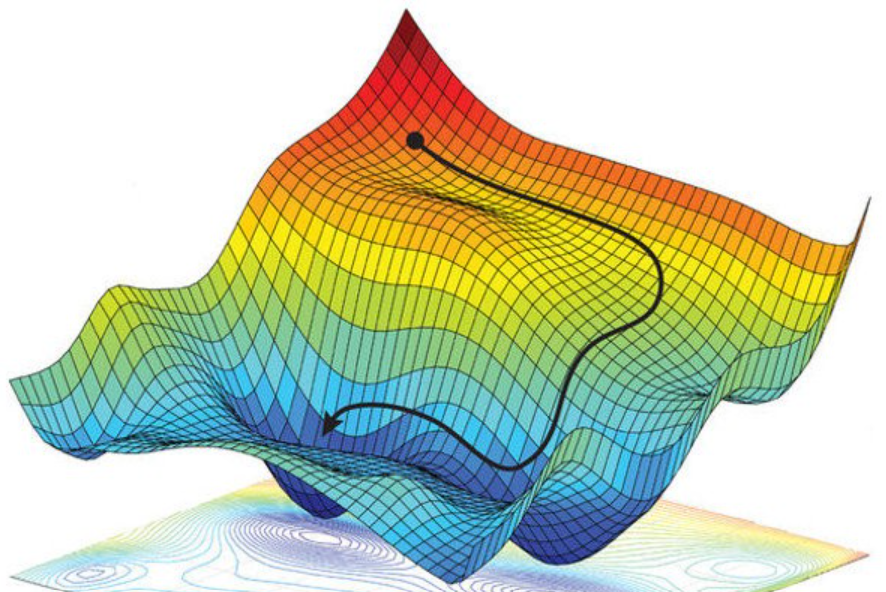

The Radio Knobs of AI: Weights, Biases, and the Learning Loop

Understanding how AI models actually learn through weights, biases, gradients, and the training loop.

A curated collection of technical explorations, architectural insights, and research findings from the intersection of AI and distributed systems.

Breaking down what tensors actually are and why they're the foundation of machine learning.

Understanding how AI models measure their mistakes and learn from them.

Diving into One-Hot encoding, smart data splitting, and the trap of the single metric.

How neural networks use embeddings to conquer NLP and tabular data.

A beginner's guide to MCP, the open standard connecting AI to the world.